Suppose we have n positive numbers w 1, w 2. ) assign codewords not to individual symbols but to strings of symbols,Īllowing them to achieve better and more robust compressions in many applications.Īpplications of Huffman’s algorithm are not limited to data compression. The coding tree is updated each time a new symbol is read from the source text.įurther, modern alternatives such as Lempel-Ziv algorithms (e.g.,

This drawback can be overcome by using dynamic Huffman encoding, in which Include the coding table into the encoded text to make its decoding possible. This scheme makes it necessary, however, to Then these frequencies are used to construct a Huffman coding tree and encode Scanning of a given text to count the frequencies of symbol occurrences in it. Simplest version of Huffman compression calls, in fact, for a preliminary Versatility, it yields an optimal, i.e., minimal-length, encoding (provided theįrequencies of symbol occurrences are independent and known in advance). The most important file-compression methods. (Extensive experiments with Huffman codes have shown that the compression ratioįor this scheme typically falls between 20% and 80%, depending on theĬharacteristics of the text being compressed.) In other words, Huffman’sĮncoding of the text will use 25% less memory than its fixed-length encoding. Ratio-a standard measure of a compression algorithm’s effectiveness-of ( 3 − 2. Thus, for this toy example, Huffman’s code achieves the compression Įncoding for the same alphabet, we would have to use at least 3 bits per each With the occurrence frequencies given and the codeword lengthsĢ. The five-symbol alphabet with the following occurrence frequencies in aġ0011011011101 is decoded as BAD _ AD. Sum of their weights in the root of the new tree as its weight.Ībove algorithm is called a Huffman tree. Make them the left and right subtree of a new tree and record the Weight (ties can be broken arbitrarily, but see Problem 2 in this section’sĮxercises). Step 2 Repeat the following operation until a single tree is obtained. The sum of the frequencies in the tree’s leaves.) (More generally, the weight of a tree will be equal to

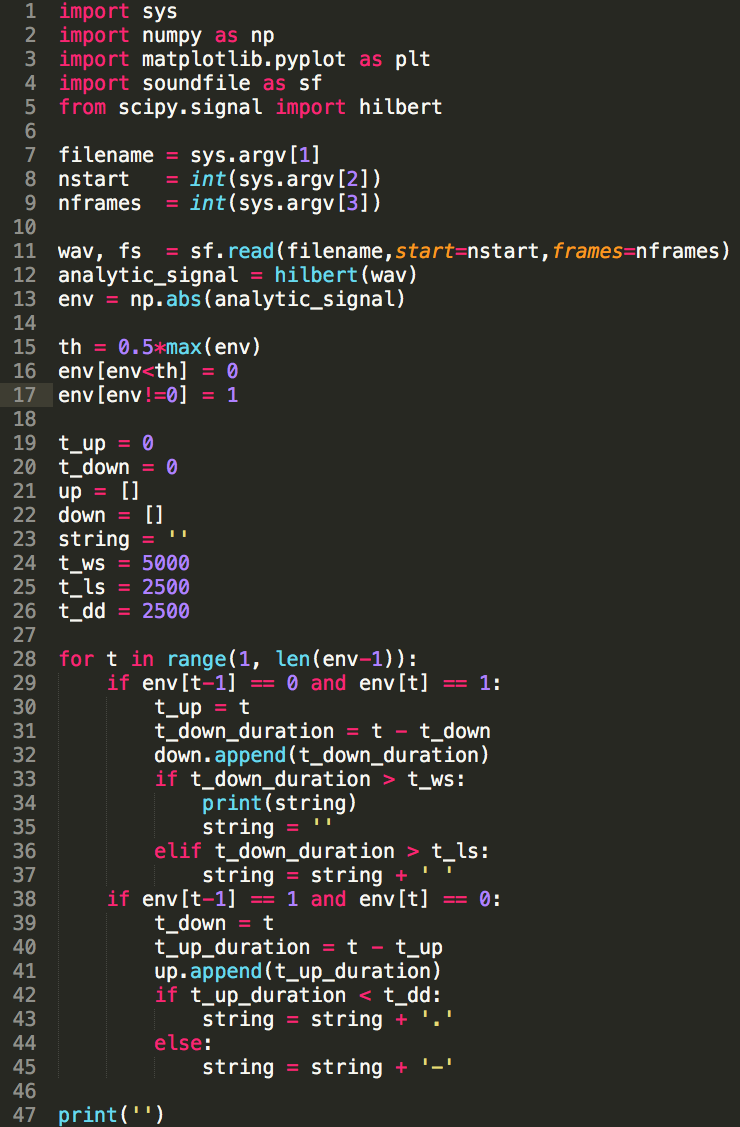

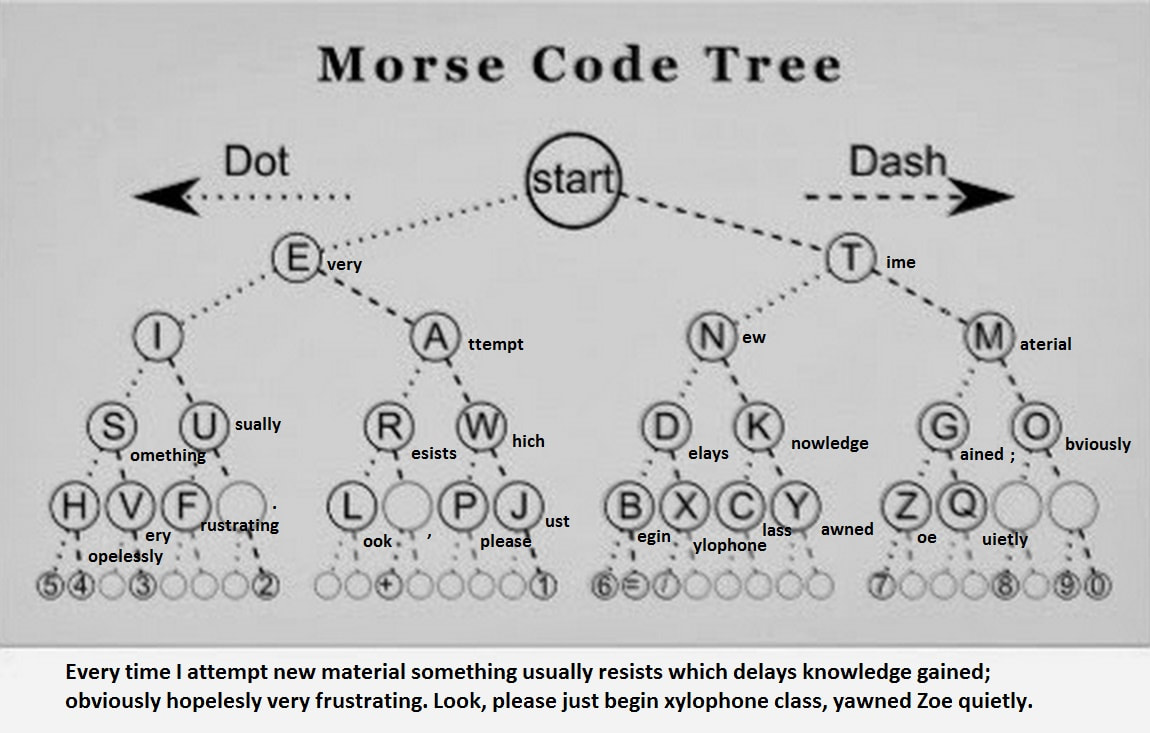

Record the frequency of each symbol in its tree’s root to indicate the Step 1 Initialize n one-node trees and label them It can be done by the following greedy algorithm, invented by David Huffman The symbol occurrences, how can we construct a tree that would assign shorterīit strings to high-frequency symbols and longer ones to low-frequency symbols? Since there is no simple path to a leaf that continues toĪnother leaf, no codeword can be a prefix of another codeword hence, any suchīe constructed in this manner for a given alphabet with known frequencies of The codeword of a symbol can thenīe obtained by recording the labels on the simple path from the root to the Symbols with leaves of a binary tree in which all the left edges are labeled byĠ and all the right edges are labeled by 1. Prefix code for some alphabet, it is natural to associate the alphabet’s This symbol, and repeat this operation until the bit string’s end is reached. Hence, with such an encoding, we can simply scan a bit string until we get theįirst group of bits that is a codeword for some symbol, replace these bits by In a prefix code, no codeword is a prefix of a codeword of another symbol. Limit ourselves to the so-called prefix-free (or simply prefix) Text represent the first (or, more generally, the i th) symbol? To avoid this complication, we can Namely, how can we tell how many bits of an encoded Variable-length encoding, which assigns codewords ofĭifferent lengths to different symbols, introduces a problem that fixed-lengthĮncoding does not have. − ) are assigned short sequences of dots andĭashes while infrequent letters such as q ( − −. In that code, frequent letters such as e (. 
This idea was used, in particular, in the telegraph code invented in Shorter bit string on the average is based on the old idea of assigning shorterĬode-words to more frequent symbols and longer codewords to less frequent One way of getting a coding scheme that yields a For example, weĬan use a fixed-length encoding that assigns to each symbol a bit string

Text’s symbols some sequence of bits called the codeword. Text that comprises symbols from some n -symbol alphabet by assigning to each of the
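To make Steps 1 and 2 of Huffman's algorithm and the prefix-code decoding scan concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not the text's own implementation. The frequency table (given as integer percentages) is an assumption, because the table did not survive in this copy; the values were chosen to agree with the BAD_AD decoding and the 2.25-bit average quoted in the text. The names `build_huffman_code`, `encode`, `decode`, and `average_bits` are hypothetical, and ties are broken by insertion order, which is one arbitrary choice among those the algorithm allows.

```python
import heapq
from itertools import count


def build_huffman_code(freqs):
    """Return {symbol: codeword}. Symbols are assumed to be strings."""
    tie = count()  # unique tie-breaker so the heap never compares trees
    # Step 1: one single-node tree per symbol, weighted by its frequency.
    # A leaf is a plain string; an internal node is a (left, right) tuple.
    heap = [(weight, next(tie), sym) for sym, weight in freqs.items()]
    heapq.heapify(heap)
    if len(heap) == 1:  # degenerate one-symbol alphabet
        return {heap[0][2]: "0"}
    # Step 2: repeatedly merge the two trees of smallest weight.
    while len(heap) > 1:
        w1, _, left = heapq.heappop(heap)
        w2, _, right = heapq.heappop(heap)
        heapq.heappush(heap, (w1 + w2, next(tie), (left, right)))

    codes = {}

    def walk(tree, prefix):
        # Left edges are labeled 0 and right edges 1, as in the text.
        if isinstance(tree, tuple):
            walk(tree[0], prefix + "0")
            walk(tree[1], prefix + "1")
        else:
            codes[tree] = prefix

    walk(heap[0][2], "")
    return codes


def encode(text, codes):
    return "".join(codes[sym] for sym in text)


def decode(bits, codes):
    """Scan the bits, emitting a symbol each time a full codeword is read."""
    inverse = {codeword: sym for sym, codeword in codes.items()}
    out, current = [], ""
    for bit in bits:
        current += bit
        if current in inverse:
            out.append(inverse[current])
            current = ""
    if current:
        raise ValueError("trailing bits do not form a codeword")
    return "".join(out)


def average_bits(freqs, codes):
    total = sum(freqs.values())
    return sum(w * len(codes[s]) for s, w in freqs.items()) / total


# Assumed frequencies (percentages); not taken from the surviving text.
freqs = {"A": 35, "B": 10, "C": 20, "D": 20, "_": 15}
codes = build_huffman_code(freqs)
avg = average_bits(freqs, codes)          # 2.25 bits per symbol
ratio = (3 - avg) / 3                     # 0.25 against a 3-bit fixed code
decoded = decode("10011011011101", codes)  # "BAD_AD"
```

A binary heap keeps each "find the two smallest weights" step logarithmic in the number of trees. The decoder relies only on the prefix property: because no codeword is a prefix of another, the first match found while scanning is always the right one.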
